Implementing a vulnerability Waiver Process for infected 3rd party libraries

Posted on March 18, 2021 by Adrian Wyssmann ‐ 9 min read

Vulnerable 3rd party libraries

When developing software you mostly rely on 3rd party libraries. For these libraries - as for any software - vulnerabilities will sooner or later be detected and reported, which also poses a risk to the software using these libraries. Handling infected (vulnerable) 3rd party libraries seems easy: once detected, they should be replaced with non-infected ones. However, this is not always possible, mainly for two reasons:

- there is not always a non-vulnerable library available (yet), but your software shall keep running

- thorough testing and/or refactoring may be required in your software to be able to use a newer version of the library

So depending on the risk, the work priorities and the resources in your company, addressing vulnerable 3rd party libraries can be challenging. It may be that fixing them is not possible or not necessary (yet). However, I believe it’s essential for any company to understand where such risks are and whether and how they are mitigated.

Have some tooling in place

At my current employer we have a lot of self-developed software, both customer-facing and non-customer-facing, but until recently we were flying a bit blind. So during the last year we added JFrog Xray as a scanner and improved our CI so that every CI run also scans for vulnerabilities and reports them back to the developers. In addition - as we use JFrog Artifactory as the artifact repository for everything - we started to enable scanning of each repository.

The latter is possible because our software developers cannot consume libraries directly from the internet; they have to use Artifactory, which basically proxies the remote repositories from the internet. The advantage of this is obvious: you have one single point where you can control what is available to the developers. This means you can block certain artifacts, like Docker images, from being used (blacklist approach), or you can even allow only certain artifacts to be consumed (whitelist approach).
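The two approaches can be illustrated with a small sketch. This is not how Artifactory implements it; the function name `is_allowed`, the pattern syntax (shell-style wildcards) and the artifact names are assumptions made only for illustration.

```python
from fnmatch import fnmatchcase
from typing import List, Optional


def is_allowed(artifact: str,
               denylist: List[str],
               allowlist: Optional[List[str]] = None) -> bool:
    """Decide whether the proxy may serve the requested artifact (hypothetical helper)."""
    # Blacklist approach: anything matching a blocked pattern is refused.
    if any(fnmatchcase(artifact, pattern) for pattern in denylist):
        return False
    # No whitelist configured: everything that is not blocked is allowed.
    if allowlist is None:
        return True
    # Whitelist approach: only explicitly listed patterns are allowed.
    return any(fnmatchcase(artifact, pattern) for pattern in allowlist)


# Hypothetical artifact names, for illustration only.
print(is_allowed("docker/nginx:1.19", denylist=["docker/nginx:*"]))
print(is_allowed("docker/alpine:3.13", denylist=[], allowlist=["docker/alpine:*"]))
```

In this sketch the blacklist always wins over the whitelist, which is one possible design choice, not the only one.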

So what now? Waiver and Waiver requests?

So now we know what vulnerable components and libraries we have, and where they are used. But how do we prevent them from being used in an uncontrolled way? We need a solution which

- is able to block any vulnerable artifact from being deployed to the Pre-Production and Production environments without being “waived”

- gives the developer a standardized way of documenting and requesting waivers

- provides formal documentation of the acceptance of waivers

Especially in more regulated environments like Finance or Medical Devices, proper documentation is mandatory.

Some terminology, so that you understand what I am talking about

| Term | Description |
|---|---|
| waiver | A waiver or ignore rule is something you can configure in your vulnerability scanner to ignore known vulnerabilities |
| waiver request | An entity which is a request to allow my software (impacted artifact (version)) to use an infected library (version) |
| impacted artifact | The software which consumes/depends on a 3rd party library (usually the software we develop) |
| infected library | The vulnerable/infected 3rd party library used by the impacted artifact version |

For example, each of these is a different waiver:

- MySoftware:v1.0.0 - consumes TheInfectedLibrary:v1.0.0

- MySoftware:v1.0.1 - consumes TheInfectedLibrary:v1.0.0

The example above shows that you have allowed MySoftware:v1.0.0 with the known vulnerable library. Now the developers have released MySoftware:v1.0.1, which still uses the same vulnerable library. The question is whether that is still OK, because

- maybe there is a new library version which is not infected, but the developer did not consider using it

- the use of the library has changed significantly, so that the library now poses a greater risk

- …

So depending on the assessment it’s fine to waive MySoftware:v1.0.1 + TheInfectedLibrary:v1.0.0 or not.
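To make the point concrete: a waiver is tied to the pair of impacted artifact version and infected library version, not to the library alone. A minimal sketch, where the `Waiver` class and the `is_waived` helper are hypothetical names and not part of any scanner:

```python
from dataclasses import dataclass
from typing import Set


@dataclass(frozen=True)
class Waiver:
    """One waiver covers exactly one artifact version using one library version."""
    impacted_artifact: str  # e.g. "MySoftware:v1.0.0"
    infected_library: str   # e.g. "TheInfectedLibrary:v1.0.0"


def is_waived(impacted_artifact: str, infected_library: str,
              waivers: Set[Waiver]) -> bool:
    # Both parts have to match; a waiver for v1.0.0 says nothing about v1.0.1.
    return Waiver(impacted_artifact, infected_library) in waivers


approved = {Waiver("MySoftware:v1.0.0", "TheInfectedLibrary:v1.0.0")}

print(is_waived("MySoftware:v1.0.0", "TheInfectedLibrary:v1.0.0", approved))
print(is_waived("MySoftware:v1.0.1", "TheInfectedLibrary:v1.0.0", approved))
```

With this model MySoftware:v1.0.1 is not covered until a new assessment leads to its own waiver.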

Assess the vulnerabilities

As you have seen, the assessment is the important part here and usually the part of the “process” which cannot be automated. An assessment requires a developer’s time to properly analyse the usage and impact of such a vulnerability. So a developer shall answer some important questions:

- what is the vulnerability and is there already a new version available?

- does the vulnerability affect us, the way we use the library in our impacted artifact (version)?

- how is our impacted artifact (version) used? is it internet facing or only internally used?

- are there already mitigations in place (in the software, or infrastructure) which lower the probability of taking advantage of the vulnerability?

- does the impacted artifact (version) process sensitive data?

- …

All these questions have to be answered in order to be able to assess the severity and impact on the company. Only with this information can one decide whether to waive the infected library (version).
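The assessment itself cannot be automated, but checking that every question has been answered can. A minimal sketch, assuming one field per question from the list above; the `Assessment` class and its field names are made up for illustration:

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class Assessment:
    """Answers to the assessment questions; None means 'not answered yet'."""
    vulnerability_summary: Optional[str] = None
    fixed_version_available: Optional[bool] = None
    affects_our_usage: Optional[bool] = None
    internet_facing: Optional[bool] = None
    mitigations_in_place: Optional[str] = None
    processes_sensitive_data: Optional[bool] = None


def unanswered(assessment: Assessment) -> List[str]:
    # Collect the names of all questions that still have no answer.
    return [f.name for f in fields(assessment)
            if getattr(assessment, f.name) is None]


draft = Assessment(vulnerability_summary="remote code execution in parser",
                   fixed_version_available=False)
print(unanswered(draft))
```

A waiver request could be refused for review as long as `unanswered` returns a non-empty list.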

My proposal for a solution

Considering we have different environments - DEV, UAT (Pre-Prod) and PRD - we can come up with some ground rules:

- Developers (and CI) work in DEV, so it shall be possible to use libraries regardless, but developers shall be informed if an infected library is used

- In UAT (Pre-Prod) we allow the download of impacted artifacts, so we can run integration/acceptance tests

- In PRD the deployment of an impacted artifact is not allowed, unless there is an approved waiver
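These ground rules can be written down as a small decision function. This is only a sketch; the `Decision` type and the `decide` function are hypothetical, and notifying the developers in every environment (not only in DEV) is my assumption.

```python
from typing import NamedTuple


class Decision(NamedTuple):
    allowed: bool            # may the artifact be used/deployed?
    notify_developers: bool  # shall the developers be informed?


def decide(environment: str, is_impacted: bool,
           has_approved_waiver: bool) -> Decision:
    if environment not in ("DEV", "UAT", "PRD"):
        raise ValueError(f"unknown environment: {environment}")
    # Artifacts without an infected library are never restricted.
    if not is_impacted:
        return Decision(allowed=True, notify_developers=False)
    # PRD: impacted artifacts need an approved waiver.
    if environment == "PRD":
        return Decision(allowed=has_approved_waiver, notify_developers=True)
    # DEV and UAT: usage is allowed, but the developers are informed.
    return Decision(allowed=True, notify_developers=True)


print(decide("DEV", is_impacted=True, has_approved_waiver=False))
print(decide("PRD", is_impacted=True, has_approved_waiver=False))
```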

Maturity of artifacts

To be able to implement different behaviour of the environments we also have to consider different maturity levels of the artifacts. The idea is simple

- for each maturity level of the artifacts there is a separate repo (per technology)

- to be considered for the next maturity level, an artifact has to pass some quality gates

- the respective environments can only pull artifacts from the matching maturity level, e.g. in the PRD environment only artifacts which passed all quality gates for production readiness can be pulled

To get this working we need two things. First, repositories representing the maturity levels, for example:

| Maturity Level | Description | Repo Identifier | Example Repos |
|---|---|---|---|
| Snapshot/Development | Artifacts created from all branches | -dev | local-maven-dev, local-docker-dev |
| Ready for Integration Testing (SIT) | Artifacts created from “main” or “release” branch | -sit | local-maven-sit, local-docker-sit |
| Ready for Pre-Production | Artifact promoted from previous maturity level e.g. integration tests passed | -uat | local-maven-uat, local-docker-uat |
| Ready for Production | Artifact promoted from previous maturity level | -prd | local-maven-prd, local-docker-prd |

The number of maturity levels and the quality gates are specific to your needs, so the above shall be considered an example, not a recommendation.

So once we have structured our artifact repositories accordingly, we have to ensure that the respective repositories are only available where they should be. For example, the “dev” repository is available in DEV but not in UAT or PRD. On the other hand, deploying from the “Ready for Production” repo in DEV is not an issue and shall be possible. Ultimately you have to implement that by

- configuring your CD in a way that this is reflected

- configuring the Docker daemon so that in a PRD cluster only PRD artifacts can be pulled

- …
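The availability rule described above boils down to "an environment may pull from its own maturity level and from any higher one". A minimal sketch, assuming the repo identifiers from the table and one environment per maturity level; `may_pull` and both constants are hypothetical names:

```python
# Maturity levels from lowest to highest, using the repo identifiers from the table.
MATURITY_ORDER = ["dev", "sit", "uat", "prd"]

# Assumption: each environment requires at least its own maturity level.
MINIMUM_MATURITY = {"DEV": "dev", "SIT": "sit", "UAT": "uat", "PRD": "prd"}


def may_pull(environment: str, repository: str) -> bool:
    """Return True if the environment may pull artifacts from the repository."""
    # The maturity level is the suffix of the repo name, e.g. "local-maven-dev".
    suffix = repository.rsplit("-", 1)[-1]
    if suffix not in MATURITY_ORDER:
        return False  # unknown repositories are never available
    required = MINIMUM_MATURITY[environment]
    return MATURITY_ORDER.index(suffix) >= MATURITY_ORDER.index(required)


print(may_pull("DEV", "local-docker-prd"))  # higher maturity is fine in DEV
print(may_pull("PRD", "local-maven-dev"))   # dev artifacts never reach PRD
```

Where this check lives (CD tool, registry permissions, network rules) depends on your setup.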

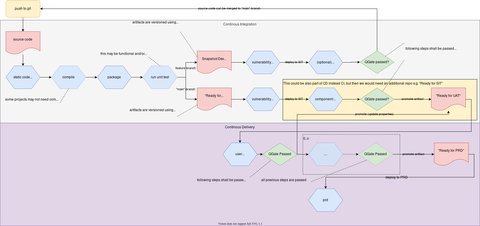

The further you also need to adjust your pipelines so that they can promote artifacts from one maturity stage to another. Conceptually this looks as follows:

Note the following:

- blue hexagon shapes represent activities which happen on the source code - depending on the project, not all may apply, e.g.

  - compilation is not necessary for script projects or where there are only config files

  - theoretically all levels of the test pyramid should be covered: unit, component and system

  - however, unit tests may not apply, especially if the project only contains configurations which are deployed. Still, one can think of doing some syntax checks instead of running unit tests; this would very well qualify as non-functional unit tests

  - the “quality” of the activities is the responsibility of the teams and can hardly be checked, e.g. we cannot judge whether 10 or 100 unit tests are appropriate - 10 may be enough…

  - if any of these processing steps does not complete successfully, processing will stop at this point and the relevant quality gate will not be reached

- green diamond shapes represent a quality gate

- the quality gate is a technical implementation which checks whether certain blue hexagons were actually successfully executed

- there may be different quality gates e.g. one which requires all blue hexagons to be executed, another where unit tests are not required

- there are different flows for the branches

  - artifacts from feature branches are only kept temporarily and can be thrown away afterwards; there is no promotion to further stages

  - main branch can be main, releases, hotfixes, whatever your git flow is

- deployment to SIT could be part of CI or CD, this also depends on your setup

- As CI refers usually to “automated build and test” it shall include automated integration testing

- promotion of the artifact shall happen automatically if the “QGate” criteria are met

- promotion of an artifact from one repo to another - and the quality gates - have to be implemented somewhere, somehow - probably in the CI and CD pipeline. This also means you have additional requirements for your CI/CD solution.
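To illustrate the idea of a quality gate and the promotion that follows it, here is a minimal sketch. It only computes the target repository; the actual copy or move is tool-specific and left out. The step names, `gate_passed`, `promotion_target` and `NEXT_LEVEL` are assumptions for illustration.

```python
from typing import Dict, Optional, Set

# Promotion path between maturity levels. "dev" has no successor, because
# artifacts from feature branches are never promoted.
NEXT_LEVEL = {"sit": "uat", "uat": "prd"}


def gate_passed(required_steps: Set[str], step_results: Dict[str, bool]) -> bool:
    # A gate passes only if every required step ran and succeeded.
    return all(step_results.get(step, False) for step in required_steps)


def promotion_target(repository: str, required_steps: Set[str],
                     step_results: Dict[str, bool]) -> Optional[str]:
    """Return the repo to promote to, or None if no promotion shall happen."""
    prefix, suffix = repository.rsplit("-", 1)
    target_level = NEXT_LEVEL.get(suffix)
    if target_level is None or not gate_passed(required_steps, step_results):
        return None
    return f"{prefix}-{target_level}"


results = {"build": True, "unit-tests": True, "integration-tests": True}
print(promotion_target("local-maven-sit", {"build", "integration-tests"}, results))
```

Different gates are simply different sets of required steps, e.g. one gate that includes "unit-tests" and another that does not.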

I don’t go into technical details here because it heavily depends on your tooling and it’s not really relevant at this point - my focus is on showing the concept/idea.

Waiver request workflow

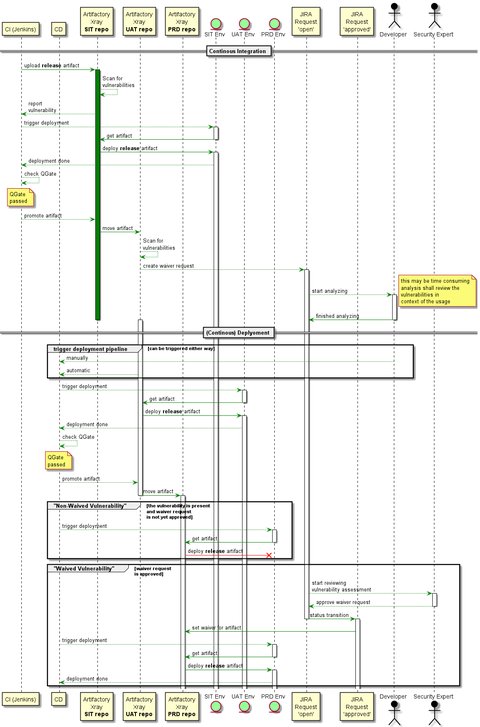

Below you see the concept of the waiver request flow:

Some important things to mention:

- artifacts are always scanned at build time, so that developers are aware of any vulnerabilities before they create a release artifact

- when a release artifact is uploaded to the artifact repo, it is scanned for vulnerabilities

- as the developer was aware of the vulnerability (see the first point) but did not fix it, this calls for a potential waiver

- for these vulnerabilities a waiver request is reported automatically - for us it’s a ticket in JIRA

- the developer has to provide a detailed analysis for all the vulnerabilities present in the infected library (version) used by the impacted artifact - I mentioned above what to consider

- I specifically say all, as you deploy the infected library (version) as a whole incl. all present vulnerabilities

- The analysis and justification for the waiver shall be clearly described so that an independent person can verify it and make a decision about it

- once the request is reviewed and approved, the waiver is automatically created in the vulnerability scanner - in Xray it’s called Ignore Rules

- creating the waiver in the tooling then ensures the artifact can be downloaded
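The flow above can be sketched as a small state machine. This is a simplified model and not our actual JIRA/Xray integration: the status names, the `WaiverRequest` class and the `create_waiver` callback are hypothetical, and the callback stands in for whatever call creates the ignore rule in your scanner.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, Dict, List


class Status(Enum):
    OPEN = "open"          # created automatically after the scan
    ANALYSED = "analysed"  # developer provided the analysis
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class WaiverRequest:
    impacted_artifact: str
    infected_library: str
    vulnerabilities: List[str]
    analysis: Dict[str, str] = field(default_factory=dict)
    status: Status = Status.OPEN

    def submit_analysis(self, analysis: Dict[str, str]) -> None:
        # All vulnerabilities of the library have to be covered, not just one.
        missing = [v for v in self.vulnerabilities if not analysis.get(v)]
        if missing:
            raise ValueError(f"analysis missing for: {missing}")
        self.analysis = dict(analysis)
        self.status = Status.ANALYSED

    def review(self, approved: bool,
               create_waiver: Callable[[str, str], None]) -> None:
        if self.status is not Status.ANALYSED:
            raise RuntimeError("request has not been analysed yet")
        if approved:
            # Stand-in for creating the ignore rule in the scanner.
            create_waiver(self.impacted_artifact, self.infected_library)
            self.status = Status.APPROVED
        else:
            self.status = Status.REJECTED


created = []
request = WaiverRequest("MySoftware:v1.0.1", "TheInfectedLibrary:v1.0.0",
                        ["VULN-1", "VULN-2"])  # placeholder vulnerability ids
request.submit_analysis({"VULN-1": "code path not reachable",
                         "VULN-2": "only used internally"})
request.review(True, lambda artifact, library: created.append((artifact, library)))
print(request.status, created)
```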

Conclusion

There are a lot of small details in the real implementation of such an “automated” process, which heavily depend on your tooling. Therefore I recommend properly considering and evaluating your needs/requirements for your tools. If you already have your tool set, you may come up with a specific implementation based on what your tools offer - as we did at my employer, where JIRA, Xray and Jenkins as CI are currently a given.

Regardless of the detailed implementation, what I meant to show with this post is that it is essential that your software/artifacts are always scanned for vulnerabilities and that you have something in place which

- gives you control on which vulnerable artifacts are deployed and what are the risks in doing so

- forces the developers to properly analyse vulnerabilities, rather than ignoring them

I also believe that ensuring you have an appropriate structure in your artifact repositories, which reflects the maturity, is only beneficial. It helps you to

- separate high quality from low quality software/artifacts

- ensure only high quality artifacts are deployed in PRD

- implement aggressive cleanup policies - e.g. “development” artifacts which are never ready for production could be thrown away after a few days, whereas released artifacts are kept longer
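The cleanup idea from the last point could be sketched like this. The retention periods are purely made-up numbers to illustrate "a few days" versus "kept longer", and `RETENTION` and `is_expired` are hypothetical names; pick values which match your own needs.

```python
from datetime import datetime, timedelta
from typing import Dict, Optional

# Assumed retention per maturity level; None means "never clean up automatically".
RETENTION: Dict[str, Optional[timedelta]] = {
    "dev": timedelta(days=3),
    "sit": timedelta(days=30),
    "uat": timedelta(days=90),
    "prd": None,
}


def is_expired(repository: str, created: datetime, now: datetime) -> bool:
    """Return True if an artifact in this repo is old enough to be removed."""
    suffix = repository.rsplit("-", 1)[-1]
    retention = RETENTION.get(suffix)
    # Unknown repos and levels without retention are never cleaned up.
    if retention is None:
        return False
    return now - created > retention


now = datetime(2021, 3, 18)
print(is_expired("local-maven-dev", datetime(2021, 3, 10), now))
print(is_expired("local-maven-prd", datetime(2019, 1, 1), now))
```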